
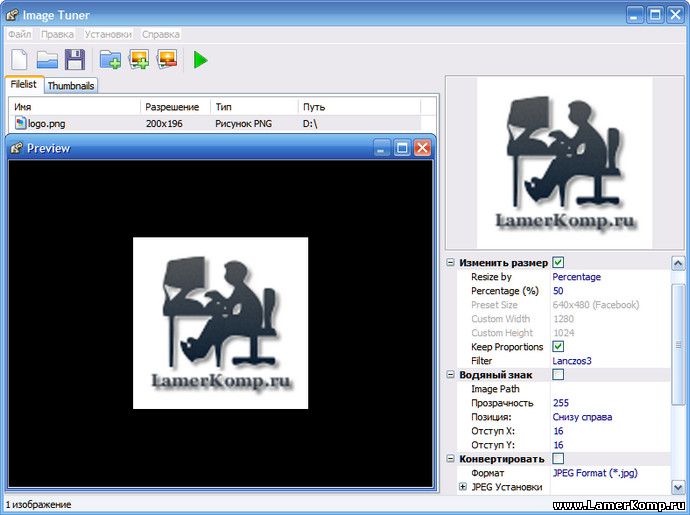

12/2/2023

Image tuner

Posts in this series:

- Hyperparameter tuning for Deep Learning with scikit-learn, Keras, and TensorFlow (last week’s post)
- Easy Hyperparameter Tuning with Keras Tuner and TensorFlow (today’s post)

Last week we learned how to use scikit-learn to interface with Keras and TensorFlow to perform a randomized cross-validated hyperparameter search. However, there are more advanced hyperparameter tuning algorithms, including Bayesian hyperparameter optimization and Hyperband, an adaptation and improvement to traditional randomized hyperparameter searches.

Both Bayesian optimization and Hyperband are implemented inside the keras tuner package. As we’ll see, utilizing Keras Tuner in your own deep learning scripts is as simple as a single import followed by a single class instantiation. From there, you train your neural network just as you normally would!

Besides ease of use, you’ll find that Keras Tuner:

- Integrates into your existing deep learning training pipeline with minimal code changes.
- Implements novel hyperparameter tuning algorithms.
- Can boost accuracy with minimal effort on your part.

To learn how to tune hyperparameters with Keras Tuner, just keep reading.

Easy Hyperparameter Tuning with Keras Tuner and TensorFlow

In the first part of this tutorial, we’ll discuss the Keras Tuner package, including how it can help automatically tune your model’s hyperparameters with minimal code. We’ll then configure our development environment and review our project directory structure.

We have several Python scripts to review today, including:

- The model architecture definition (whose hyperparameters we’ll be tuning, including the number of filters in the CONV layer, learning rate, etc.).
- A driver script that glues all the pieces together and allows us to test various hyperparameter optimization algorithms, including Bayesian optimization, Hyperband, and traditional random search.

We’ll wrap up this tutorial with a discussion of our results.

What is Keras Tuner, and how can it help us automatically tune hyperparameters?

Figure 1: Using Keras Tuner to automatically tune the hyperparameters to your Keras and TensorFlow models.

Last week, you learned how to use scikit-learn’s hyperparameter searching functions to tune the hyperparameters of a basic feedforward neural network (including batch size, the number of epochs to train for, learning rate, and the number of nodes in a given layer).

While this method worked well (and gave us a nice boost in accuracy), the code wasn’t necessarily “pretty.” And more importantly, it doesn’t make it easy for us to tune the “internal” parameters of a model architecture (e.g., the number of filters in a CONV layer, stride size, size of a POOL, dropout rate, etc.).

Libraries such as keras tuner make it dead simple to implement hyperparameter optimization into our training scripts in an organic manner:

- As we implement our model architecture, we define what ranges we want to search over for a given parameter (e.g., # of filters in our first CONV layer, # of filters in the second CONV layer, etc.).
- We then define an instance of either Hyperband, RandomSearch, or BayesianOptimization.
- The keras tuner package takes care of the rest, running multiple trials until we converge on the best set of hyperparameters.
